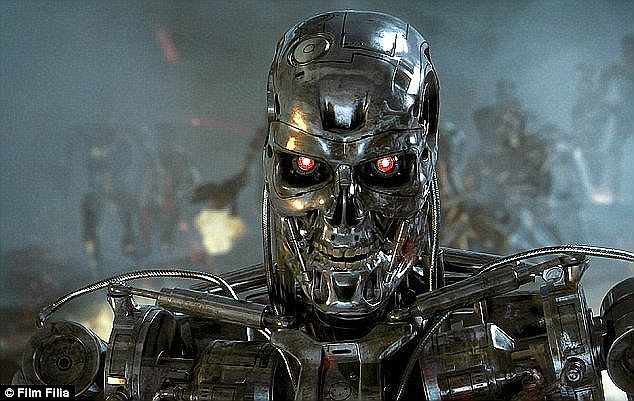

Humans will be left 'defenceless' by killer drones

28 May 2015, 12:29

Humans could be left 'utterly defenceless' by small and agile flying robots that think for themselves and are designed to kill, a leading US computer science expert has warned.

He said that drones will be limited only by their physical abilities, and not by the shortcomings of artificial intelligence (AI). And he called on experts to take a stance in order to prevent the development of such unstoppable killing machines.

The deadly drones are the likely 'endpoint' of the current technological march towards lethal autonomous weapons systems (Laws), according to Professor Stuart Russell from the University of California at Berkeley.

Such weapons, whose decisions about which targets to select and destroy are determined by AI rather than humans, could feasibly be deployed within the next decade, said the professor.

He added: 'The stakes are high: Laws have been described as the third revolution in warfare, after gunpowder and nuclear arms.'

Writing in a comment article published in the journal Nature, Professor Russell argues that the capabilities of Laws will be limited more by the physical constraints of range, speed and payload than by any deficiencies in their controlling AI systems.

He continued: 'As flying robots become smaller, their manoeuvrability increases and their ability to be targeted decreases.

'They have a shorter range, yet they must be large enough to carry a lethal payload - perhaps a one-gram shaped charge to puncture the human cranium.

'Despite the limits imposed by physics, one can expect platforms deployed in the millions, the agility and lethality of which will leave humans utterly defenceless. This is not a desirable future.'

Professor Russell called on his peers - AI and robotics scientists - and professional scientific organisations to take a position on Laws, just as physicists did over nuclear weapons and biologists over the use of disease agents in warfare.

'Doing nothing is a vote in favour of continued development and deployment,' he cautioned.

Some have argued for an international treaty limiting autonomous weapons or banning them altogether. But the three countries at the forefront of the technology - the US, UK and Israel - all insist they have internal weapons review processes that ensure compliance with international law, making such a treaty unnecessary, said Professor Russell.

The United Nations has held a number of meetings on Laws under the auspices of the Convention on Certain Conventional Weapons (CCW) in Geneva. At the latest meeting in April, attended by Professor Russell, several countries - notably Germany and Japan - demonstrated strong opposition to lethal autonomous weapons.

Almost all states agreed with the need for 'meaningful human control' over targeting and engagement decisions made by the machines.

'Unfortunately the meaning of "meaningful" is still to be determined,' said Professor Russell.

He stressed that it was 'difficult or impossible' for current AI systems to satisfy the subjective requirements of the 1949 Geneva Convention on humane conduct in war, which emphasise the need for military necessity, discrimination between combatants and non-combatants, and proper regard for the potential for collateral damage.

Professor Russell added: 'Laws could violate fundamental principles of human dignity by allowing machines to choose who to kill - for example they might be tasked to eliminate anyone exhibiting "threatening behaviour".

'The potential for Laws technologies to bleed over into peacetime policing functions is evident to human rights organisations and drone manufacturers.

'In my view, the overriding concern should be the probable endpoint of this technological trajectory.'

Category: Technology
